4.2. Finite difference method#

4.2.1. Finite differences#

Another method of solving boundary-value problems (and also partial differential equations, as we’ll see later) involves finite differences, which are numerical approximations to exact derivatives.

Recall that the exact derivative of a function \(f(x)\) at some point \(x\) is defined as:

So, we can approximate this derivative using a finite difference (rather than an infinitesimal difference as in the exact derivative):

which involves some error. This is a forward difference for approximating the first derivative. We can also approximate the first derivative using a backward difference:

To understand the error involved in these differences, we can use Taylor’s theorem to obtain Taylor series expansions:

where the \(\mathcal{O}()\) notation stands for “order of magnitude of”. So, we can see that each of these approximations is first-order accurate.

# import libraries for numerical functions and plotting

import numpy as np

import matplotlib.pyplot as plt

# these lines are only for helping improve the display

import matplotlib_inline.backend_inline

matplotlib_inline.backend_inline.set_matplotlib_formats('pdf', 'png')

plt.rcParams['figure.dpi']= 300

plt.rcParams['savefig.dpi'] = 300

4.2.2. Second-order finite differences#

We can obtain higher-order approximations for the first derivative, and an approximations for the second derivative, by combining these Taylor series expansions:

Subtracting the Taylor series for \(f(x+\Delta x)\) by that for \(f(x-\Delta x)\) gives:

which is a second-order accurate approximation for the first derivative.

Adding the Taylor series for \(f(x+\Delta x)\) to that for \(f(x-\Delta x)\) gives:

which is a second-order accurate approximation for the second derivative.

4.2.3. Solving ODEs with finite differences#

We can use finite differences to solve ODEs by substituting them for exact derivatives, and then applying the equation at discrete locations in the domain. This gives us a system of simultaneous equations to solve.

For example, let’s consider the ODE

with the boundary conditions \(y(0) = 1\) and \(y(2) = 8\).

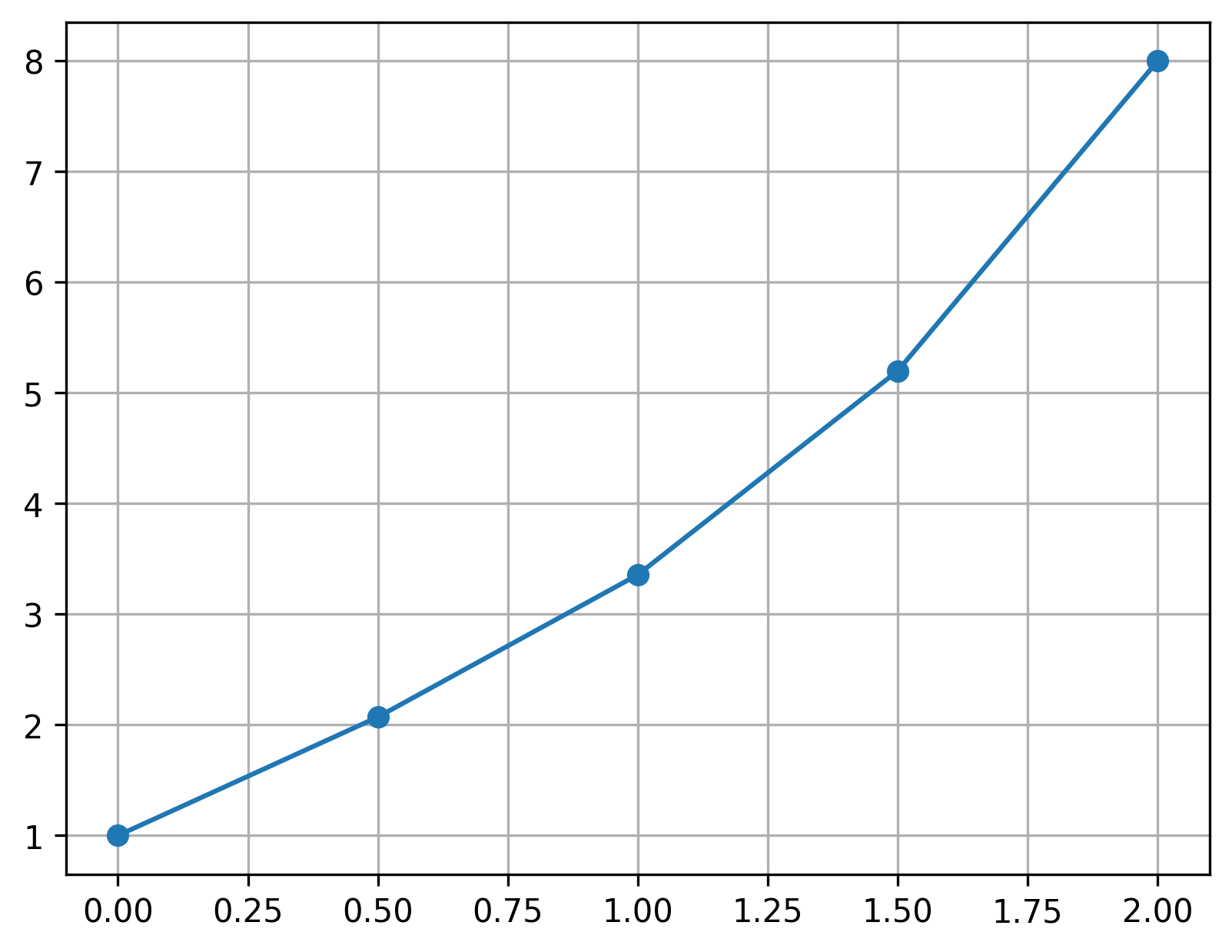

First, we discretize the continuous domain: divide it into a number of discrete segments. For now, let’s choose \(\Delta x = 0.5\), which creates four segments and thus five points: \(x_1 = 0, x_2 = 0.5, x_3 = 1.0, x_4 = 1.5, x_5 = 2.0\).

Our goal is then to find approximate values of \(y(x)\) at these points: \(y_1\) through \(y_5\). So, we have five unknowns, and need five equations to solve for them. We can use the ODE to provide these equations, by replacing the derivatives with finite differences, and applying the equation at particular discrete locations.

Recall that \(y(x)\) is a function just like \(f(x)\), and so we can apply the above finite difference equations to \(y(x)\) and \(y(x+\Delta x)\). Now that we have points, or nodes, at locations separated by \(\Delta x\), we can consider a point \(x_i\) where \(y(x_i) = y_i\), \(y(x_i + \Delta x) = y(x_{i+1}) = y_{i+1}\), and \(y(x_i - \Delta x) = y(x_{i-1}) = y_{i-1}\).

To do this, we’ll follow a few steps:

1.) Replace exact derivatives in the original ODE with finite differences, and apply the equation at a particular location \((x_i, y_i)\).

For our example, this gives:

which applies at location \((x_i, y_i)\).

2.) Next, rearrange the equation into a recursion formula:

We can use this equation to get an equation for each of the interior points in the domain.

For the first and last points—the boundary points—we already have equations, given by the boundary conditions.

3.) Set up system of linear equations

Applying the recursion formula to the interior points, and the boundary conditions for the boundary points, we can get a system of simultaneous linear equations:

This is a system of five equations and five unknowns, which we can solve! But, solving using substitution would be painful, so let’s represent this system of equations using a matrix and vectors:

or, more compactly, \(A \mathbf{y} = \mathbf{b}\).

4.) Solve the linear system of equations

The final step is just to solve. We can do this in Matlab with y = A \ b. (This is equivalent to y = inv(A)*b, but faster.)

A = np.array([

[1.0, 0, 0, 0, 0],

[0.875, -2.125, 1.125, 0, 0],

[0, 0.75, -2.25, 1.25, 0],

[0, 0, 0.625, -2.375, 1.375],

[0, 0, 0, 0, 1]

])

b = np.array([1.0, 0.25, 0.5, 0.75, 8.0])

x = np.linspace(0, 2, 5)

y = np.linalg.solve(A, b)

plt.plot(x, y, 'o-')

plt.grid()

plt.show()

4.2.3.1. General implementation#

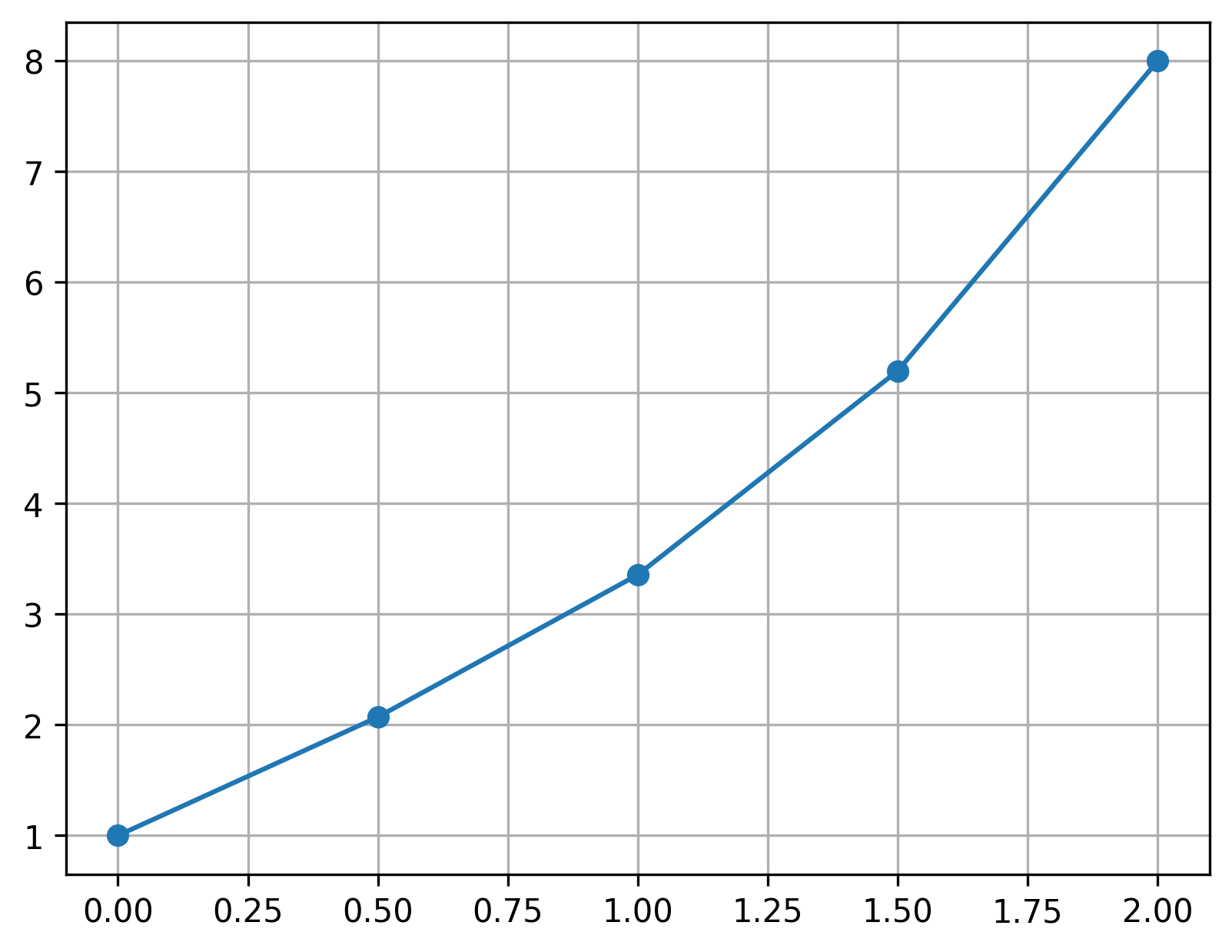

Of course, all of this will be easier if we implement in Python in a general way. We’ll use a for loop to populate the coefficient matrix \(A\) and right-hand-side vector \(\mathbf{b}\):

dx = 0.5

x_vals = np.arange(0, 2.01, dx)

A = np.zeros((len(x_vals), len(x_vals)))

b = np.zeros_like(x_vals)

for idx, x in enumerate(x_vals):

if idx == 0:

A[0,0] = 1

b[0] = 1

elif idx == len(x_vals) - 1:

A[-1,-1] = 1

b[-1] = 8

else:

A[idx, idx-1] = 1 - x*dx/2

A[idx, idx] = -2 - x*dx**2

A[idx, idx+1] = 1 + x*dx/2

b[idx] = 2 * x * dx**2

y_vals = np.linalg.solve(A, b)

plt.plot(x_vals, y_vals, 'o-')

plt.grid()

plt.show()

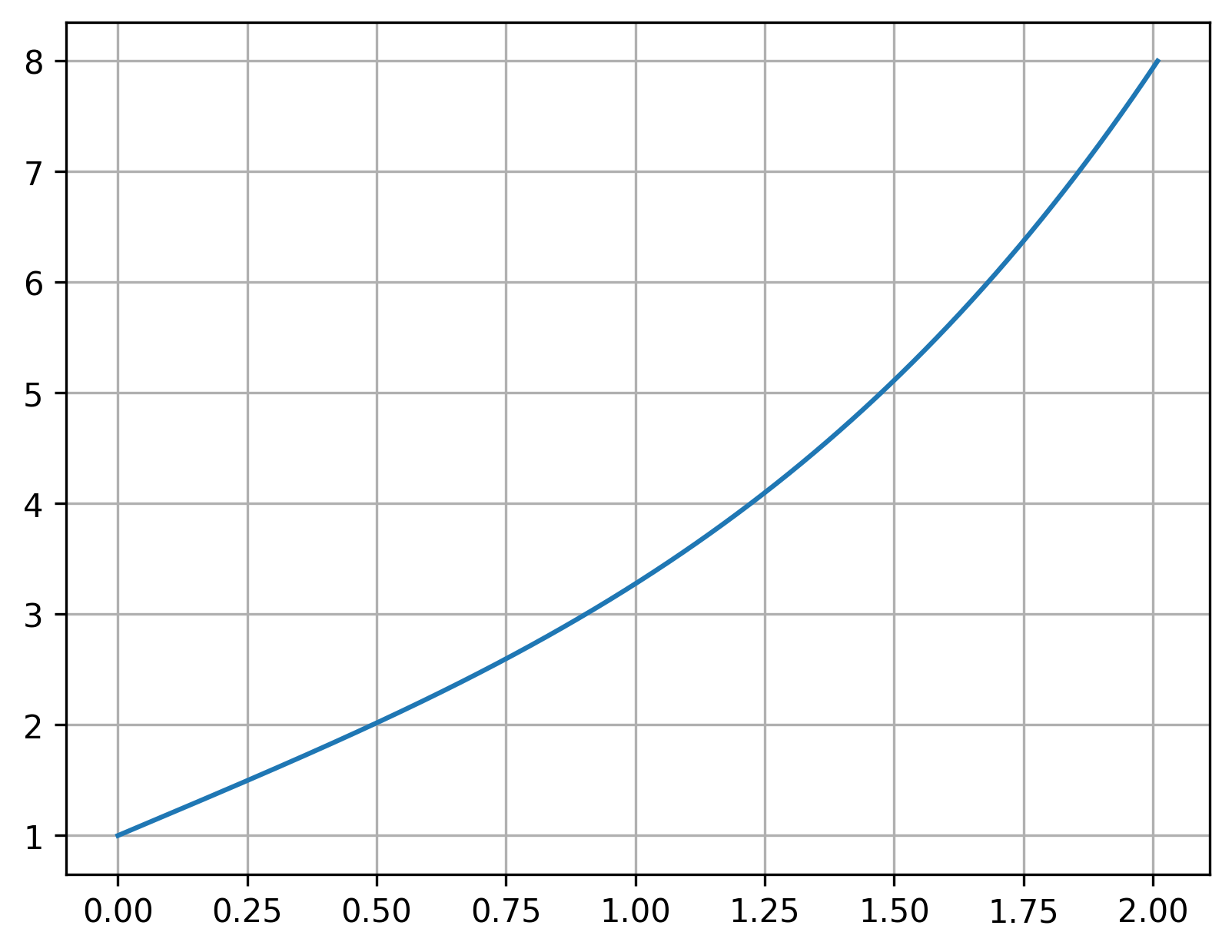

This looks good, but we can get a more-accurate solution by reducing our step size \(\Delta x\):

dx = 0.001

x_vals = np.arange(0, 2.01, dx)

A = np.zeros((len(x_vals), len(x_vals)))

b = np.zeros_like(x_vals)

for idx, x in enumerate(x_vals):

if idx == 0:

A[0,0] = 1

b[0] = 1

elif idx == len(x_vals) - 1:

A[-1,-1] = 1

b[-1] = 8

else:

A[idx, idx-1] = 1 - x*dx/2

A[idx, idx] = -2 - x*dx**2

A[idx, idx+1] = 1 + x*dx/2

b[idx] = 2 * x * dx**2

y_vals = np.linalg.solve(A, b)

plt.plot(x_vals, y_vals)

plt.grid()

plt.show()

4.2.4. Boundary conditions#

We will encounter four main kinds of boundary conditions. Consider the ODE \(y^{\prime\prime} + y = 0\), on the domain \(0 \leq x \leq L\).

First type, or Dirichlet, boundary conditions specify fixed values of \(y\) at the boundaries: \(y(0) = a\) and \(y(L) = b\).

Second type, or Neumann, boundary conditions specify values of the derivative at the boundaries: \(y^{\prime}(0) = a\) and \(y^{\prime}(L) = b\).

Third type, or Robin, boundary conditions specify a linear combination of the function value and its derivative at the boundaries: \(a \, y(0) + b \, y^{\prime}(0) = g(0)\) and \(a \, y(L) + b \, y^{\prime}(L) = g(L)\), where \(g(x)\) is some function.

Mixed boundary conditions, which combine any of these three at the different boundaries. For example, we could have \(y(0) = a\) and \(y^{\prime}(L) = b\).

Whichever type of boundary condition we are dealing with, the goal will be to construct an equation representing the boundary condition to incorporate in our system of equations.

If we have a fixed value boundary condition, such as \(y(0) = a\), then this equation is straightforward:

where \(y_1\) is the first point in the grid of points, corresponding to \(x_1 = 0\). (We saw this in the example above.) In Python, we can implement this equation with

A[0,0] = 1

b[0] = a

If we have a fixed derivative boundary condition, such as \(y^{\prime}(0) = 0\), then we need to use a finite difference to represent the derivative. When the boundary condition is at the starting location, \(x=0\), the easiest way to do this is with a forward difference:

We can implement this in Python with

A[0,0] = -1

A[0,1] = 1

b[0] = 0

When we have this sort of derivative boundary condition at the right side of the domain, at \(x=L\), then we can use a backward difference to represent the derivative:

where \(y_n\) is the final point (\(x_n = L\)) and \(y_{n-1}\) is the second-to-last point (\(x_{n-1} = L - \Delta x\)). We can implement this with

A[-1,-2] = -1

A[-1,-1] = 1

b[-1] = 0

If we have a linear combination of a fixed value and fixed derivative, like \(a \, y(0) + b \, y^{\prime}(0) = c\), then we can combine the above approaches using a forward difference:

and in Python:

A[0,0] = a*dx - b

A[0,1](1,2) = b

b[0] = c * dx

4.2.4.1. Using central differences for derivative BCs#

When a boundary condition involves a derivative, we can use a central difference to approximate the first derivative; this is more accurate than a forward or backward difference.

Consider the formula for a central difference at \(x=0\), applied for the boundary condition \(y^{\prime}(0) = 0\):

where \(y_0\) is an imaginary, or ghost, node outside the domain. We can’t actually keep this point in our implementation, because it isn’t a real point.

We still need an equation for the point at the boundary, \(y_1\). To get this, we’ll apply the regular recursion formula, normally used at interior points:

where \(a\), \(b\), \(c\), and \(f(x)\) depend on the problem. Normally we wouldn’t use this at the boundary node, \(y_1\), because it references a point outside the domain to the left—but we have an equation for that! From above, based on the boundary condition, we have \(y_0 = y_2\). If we incorporate that into the recursion formula, we can eliminate the ghost node \(y_0\):

which is the equation we can actually use at the boundary point. In Python, this looks like

A[0,0] = b

A[0,1] = a + c

b[0] = f(x[0])

4.2.5. Example: nonlinear BVP#

So far we’ve seen how to handle a linear boundary value problem, but what if we have a nonlinear BVP? This is going to be trickier, because our work so far relies on using linear algebra to solve the system of (linear) equations.

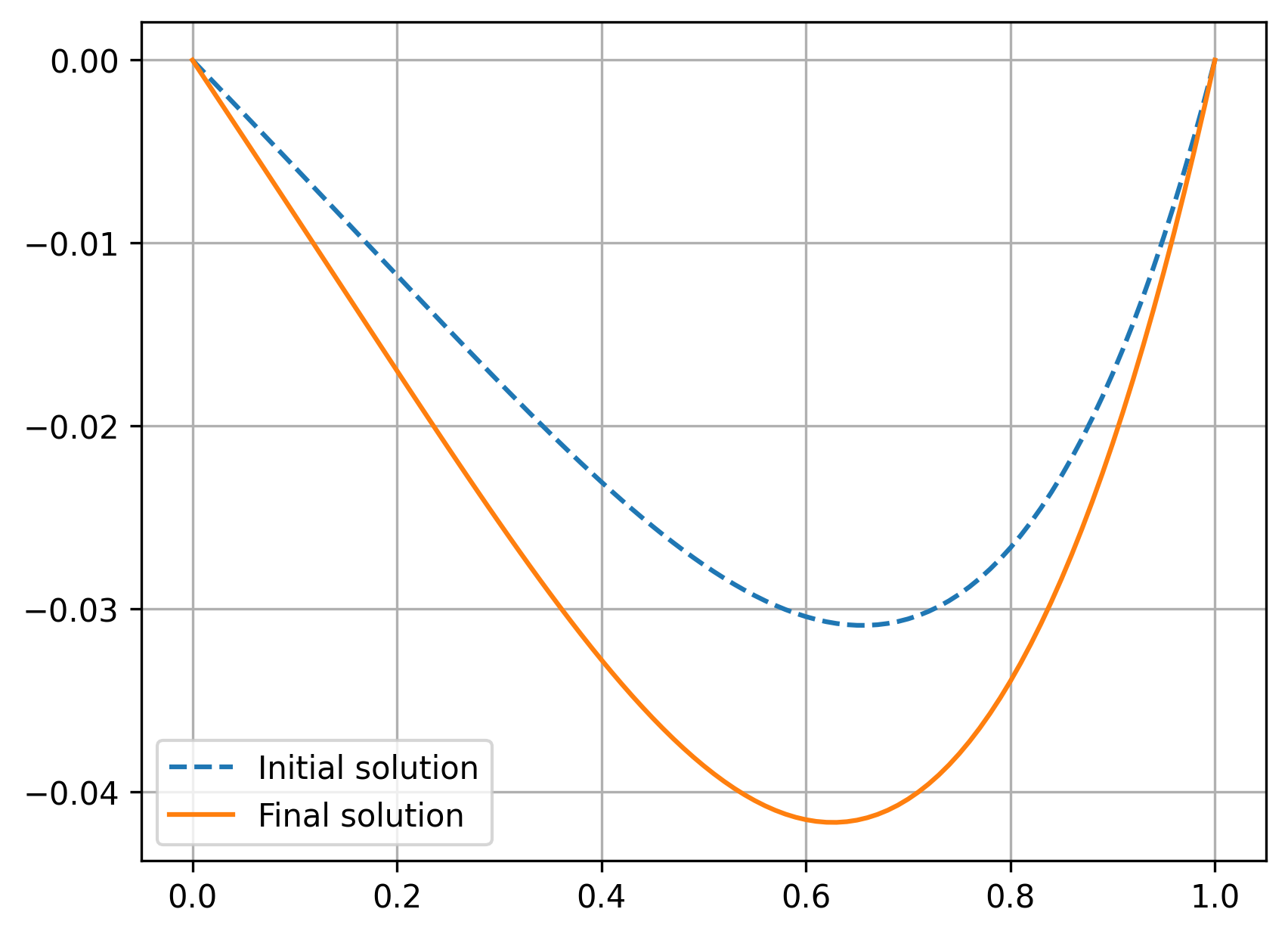

For example, consider the 2nd-order ODE

with the boundary conditions \(y(0) = y(1) = 0\). This is nonlinear, due to the \(y^3\) term on the right-hand side.

To solve this, let’s first convert it into a discrete form, by replacing the second derivative with a finite difference and any \(x\)/\(y\) present with \(x_i\) and \(y_i\). We’ll also move any constants (i.e., terms that don’t contain \(y_i\)) and the nonlinear term to the right-hand side:

where the boundary conditions are now \(y_1 = 0\) and \(y_n = 0\), with \(n\) as the number of grid points. We can rearrange and simplify into our recursion formula:

The question is: how do we solve this now? The nonlinear term involving \(y_i^3\) on the right-hand side complicates things, but we know how to set up and solve this without the nonlinear term. We can use an approach known as successive iteration:

Solve the ODE without the nonlinear term to get an initial “guess” to the solution for \(y\).

Then, incorporate that guess solution in the nonlinear term on the right-hand side, treating it as a constant. We can call this \(y_{\text{old}}\). Then, solve the full system for a new \(y\) solution.

Check whether the new \(y\) matches \(y_{\text{old}}\) with some tolerance. For example, check whether \(\max\left(\left| y - y_{\text{old}} \right| \right) < \) some tolerance, such as \(10^{-6}\). If this is true, then we can consider the solution converged. If it is not true, then set \(y_{\text{old}} = y\), and repeat the process starting at step 2.

Let’s implement that process in Python:

dx = 0.01

x_vals = np.arange(0, 1.001, dx)

A = np.zeros((len(x_vals), len(x_vals)))

b = np.zeros(len(x_vals))

# First, solve the problem without the nonlinear term:

for idx, x in enumerate(x_vals):

if idx == 0:

# x = 0 boundary condition

A[0,0] = 1

b[0] = 0

elif idx == len(x_vals) - 1:

# x = L boundary condition

A[-1,-1] = 1

b[-1] = 0

else:

# interior nodes, use recursion formula

A[idx, idx-1] = 1

A[idx,idx] = -2 - 3*dx**2

A[idx, idx+1] = 1

b[idx] = x**2 * dx**2

# get solution without nonlinear term

y_vals = np.linalg.solve(A, b)

plt.plot(x_vals, y_vals, '--', label='Initial solution')

# Now, set up iterative process to solve while

# incorporating nonlinear terms

iters = 1

y_old = np.zeros_like(x_vals)

while np.max(np.abs(y_vals - y_old)) > 1e-6:

y_old[:] = y_vals[:]

# A matrix is not changed, but the b vector does

for idx, x in enumerate(x_vals):

if idx > 0 and idx < len(x_vals)-1:

b[idx] = x**2 * dx**2 + 100*(dx**2)*y_old[idx]**2

y_vals = np.linalg.solve(A, b)

iters += 1

print(f'Number of iterations: {iters}')

plt.plot(x_vals, y_vals, label='Final solution')

plt.legend()

plt.grid()

plt.show()

Number of iterations: 16

Another option is just to set our “guess” for the \(y\) solution to be zero, rather than solve the problem in two steps:

dx = 0.01

x_vals = np.arange(0, 1.001, dx)

A = np.zeros((len(x_vals), len(x_vals)))

b = np.zeros_like(x_vals)

# Set up the coefficient matrix, which does not change

for idx, x in enumerate(x_vals):

if idx == 0:

# x = 0 boundary condition

A[0,0] = 1

b[0] = 0

elif idx == len(x_vals) - 1:

# x = L boundary condition

A[-1,-1] = 1

b[-1] = 0

else:

# interior nodes, use recursion formula

A[idx, idx-1] = 1

A[idx,idx] = -2 - 3*dx**2

A[idx, idx+1] = 1

b[idx] = x**2 * dx**2

# just use zeros as our initial guess for the solution

y_vals = np.zeros_like(x_vals)

# Successive iteration

iters = 1

# setting this to some random values, just to enter the while loop

y_old = 100 * np.random.rand(len(x_vals))

while np.max(np.abs(y_vals - y_old)) > 1e-6:

y_old[:] = y_vals[:]

# A matrix is not changed, but the b vector does

for idx, x in enumerate(x_vals):

if idx > 0 and idx < len(x_vals)-1:

b[idx] = x**2 * dx**2 + 100*(dx**2)*y_old[idx]**2

y_vals = np.linalg.solve(A, b)

iters += 1

print(f'Number of iterations: {iters}')

Number of iterations: 17

This made our process take slightly more iterations, because the initial guess was slightly further away from the final solution. For other problems, having a bad initial guess could make the process take much longer, so coming up with a good initial guess may be important.

4.2.6. Example: heat transfer through a fin#

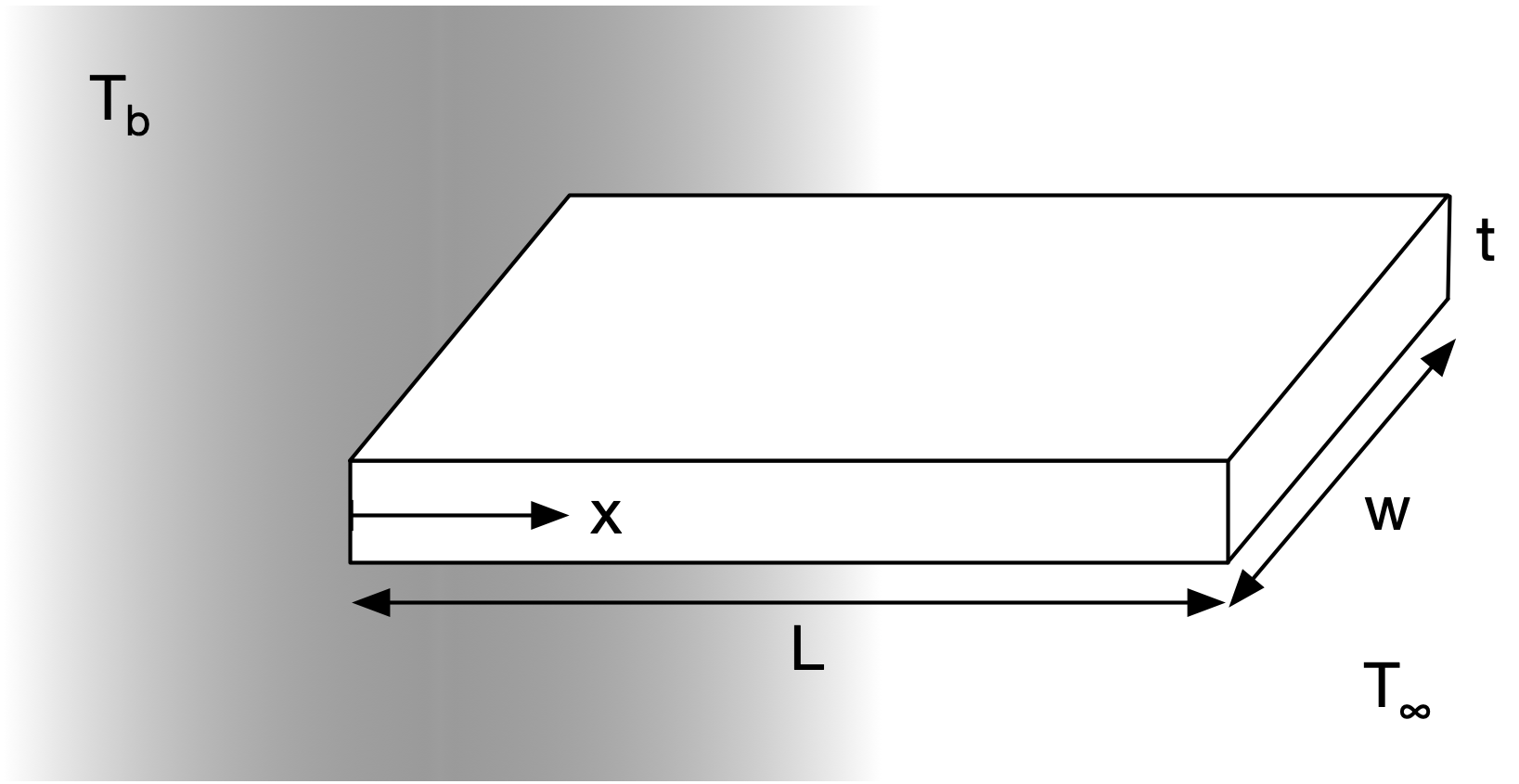

Let’s now consider a more complicated example: heat transfer through an extended surface (a fin).

Fig. 4.2 Geometry of a heat transfer fin#

In this situation, we have the temperature of the body \(T_b\), the temperature of the ambient fluid \(T_{\infty}\); the length \(L\), width \(w\), and thickness \(t\) of the fin; the thermal conductivity of the fin material \(k\); and convection heat transfer coefficient \(h\).

The boundary conditions can be defined in different ways, but generally we can say that the temperature of the fin at the wall is the same as the body temperature, and that the fin is insulated at the tip. This gives us

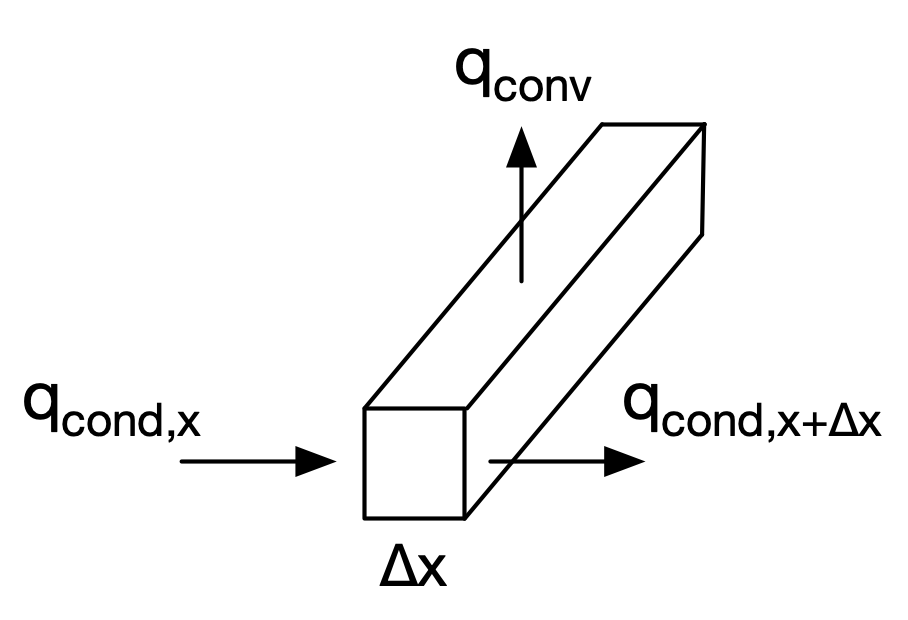

Our goal is to solve for the temperature distribution \(T(x)\). To do this, we need to set up a governing differential equation. Let’s do a control volume analysis of heat transfer through the fin:

Fig. 4.3 Control volume for heat transfer through the fin#

Given a particular volumetric slice of the fin, we can define the heat transfer rates of conduction through the fin and convection from the fin to the air:

where \(P\) is the perimeter (so that \(P \, dx\) is the heat transfer area to the fluid) and \(A_c\) is the cross-sectional area.

Performing a balance through the control volume:

then we have as a governing equation

where \(m^2 = (h P)/(k A_c)\).

We can obtain an exact solution for this ODE. For convenience, let’s define a new variable, \(\theta\), which is a normalized temperature:

where \(\theta^{\prime} = T^{\prime}\) and \(\theta^{\prime\prime} = T^{\prime\prime}\). This gives us a new governing equation:

This is a 2nd-order homogeneous ODE, which looks a lot like \(y^{\prime\prime} + a y = 0\). The exact solution is then

We’ll use this to look at the accuracy of a numerical solution, but we will not be able to find an exact solution for more complicated versions of this problem.

We can also solve this numerically using the finite difference method. Let’s replace the derivative with a finite difference:

which we can rearrange into a recursion formula:

This gives us an equation for all the interior nodes; we can use the above boundary conditions to get equations for the boundary nodes. For the boundary condition at \(x=L\), \(T^{\prime}(x=L) = 0\), let’s use a backward difference:

Combining all these equations, we can construct a linear system: \(A \mathbf{T} = \mathbf{b}\).

4.2.6.1. Heat transfer with radiation#

Let’s now consider a more-complicated case, where we also have radiation heat transfer occuring along the length of the fin. Now, our governing ODE is

This is a bit trickier to solve because of the nonlinear term involving \(T^4\). But, we can handle it via the iterative solution method discussed above.